A lockdown-based approach to online misinformation

Envisioning an effective social platform oversight board

First off, let me hype two things I’m working on. First, tonight, Saturday night, I’m hosting a get-together for socialists on the VC-heavy audio app Clubhouse. I think it’s fun and interesting to bring socialist thought into unfriendly spaces that the privileged have access to. If you’re on the app, stop by tonight.

Second, I’m putting together a regular reading group on future visions of the economy, particularly looking at cooperative models for creating sustainable businesses in and outside of tech. I’m really excited about the guests we have lined up for the group and the topics we’re going to cover. We’ll meet monthly starting in January. I hope that this group could grow into a community of technologists and workers interested in building alternatives to capitalist organizational structures. Sign up to get infrequent emails about topics and meetings.

Thanks, and on to the letter.

This month we saw the Facebook Oversight Board accept its first cases, and we are immediately learning the limits of the external Supreme Court approach. In particular, we are seeing that the board is not empowered to act fast or make broad decisions. Instead, it adjudicates particular cases on a multi-month timeline.

Each case will be referred to a five-member panel that includes one person from the same region as the original content. These panels will make their decisions — and Facebook will act on them — within 90 days. The oversight board, whose first members were announced in May, includes digital rights activists and former European Court of Human Rights judge András Sajó. Their decisions will be informed by public comments.

I think there is something valuable about an external board that is not under fiduciary duty to Facebook the way Facebook's Board of Directors is. But I'm not convinced that the important question in online communication is whether a particular piece of 𝜀-sized content is allowed or not. By the time a piece of misinformation content has gone viral and been adjudicated, many things have gone wrong and doubtless hundreds or thousands of similar pieces of content have succeeded as well. I think this oversight is operating at the wrong level.

Getting a handle on misinformation is going to require product-level changes to social software. Today's most popular social software seems purpose-built to spread misinformation as rapidly as possible. As social network creators got better at measuring the way people use their products, social software shifted from the 1:1 communication model of instant messaging and SMS to a broadcast model built on feeds. Critical metrics like time spent and ad revenue are all juiced by giving users tools to drive the most engagement with the least effort. This is, it turns out, exactly the kind of calculation an actor intent on spreading misinformation would make.

I'm going to propose a framework for how I understand misinformation campaigns. Then I will describe product-level mitigations that would materially impact misinformation via targeted but rigorous methods.

Framework

Let's break down the success determinants of misinformation broadly into three factors:

Motivated Actors

Public Environment

Product Structure

Motivated Actors x Public Environment x Product Structure = Success of misinformation campaigns

If you take away any of these factors, misinformation campaigns will not succeed.
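To make the multiplicative claim concrete, here is a minimal sketch. It assumes each factor can be scored between 0 and 1, which the framework itself does not specify; the function name `campaign_success` and all the numbers are hypothetical, chosen only to show how a product behaves.

```python
def campaign_success(motivated_actors: float,
                     public_environment: float,
                     product_structure: float) -> float:
    """Multiply the three factors; each is a hypothetical score in [0, 1]."""
    for score in (motivated_actors, public_environment, product_structure):
        if not 0.0 <= score <= 1.0:
            raise ValueError("each factor must be between 0 and 1")
    return motivated_actors * public_environment * product_structure


# Illustrative numbers only, not measurements.
baseline = campaign_success(0.9, 0.8, 0.9)

# Removing one factor entirely zeroes the product, whatever the others are.
no_product_fit = campaign_success(0.9, 0.8, 0.0)
```

The point of a product rather than a sum is that a strong showing on two factors cannot compensate for the absence of the third.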

Motivated Actors

Misinformation doesn't come from the ether; it is created by individuals acting in concert for a specific aim. Most often we hear about state-sponsored or ideologically-motivated actors spreading misinformation. In the state-sponsored case, we imagine call centers full of spammers who are paid to fill social media feeds with conspiracy theories and inflammatory rhetoric. Ideologically-motivated actors might be similar but act more autonomously and are sometimes paid and sometimes not.

But there are also misinformation actors motivated purely by profit. Gathering large ideologically-focused Facebook groups, for instance, is profitable, as the groups can be sold to other actors who mine their data or inject their own misinformation campaigns into them. It surprises people to find that the same actors will often start left-leaning and right-leaning groups and sell, for example, targeted T-Shirts in both.

Suppose the place in the misinformation formula we choose to attack is Motivated Actors. How do we find and stop state-sponsored misinformation actors, who are paid out of state budgets? I'm certainly skeptical that we can. Other states can conduct intelligence operations to discover and expose state-sponsored misinformation campaigns, but I don't see how they can be stopped. I certainly don't see how the US state can justifiably threaten other nation-states over their misinformation campaigns. A recent, surprisingly anti-imperialist New York Times Opinion piece cites Dov H. Levin's argument in Meddling in the Ballot Box that the US "has interfered in foreign elections 63 times".

What about profit-driven misinformation actors? There might be some more leverage here. These actors are probably veteran spammers who have graduated from email spam campaigns to selling knockoff sunglasses in hacked Facebook accounts and now to ideologically-inflammatory misinformation content. These are people with technical skills who aren't working at normal, productive jobs and instead are producing misinformation campaigns. Making these campaigns less profitable would lessen the motivation to conduct them. One idea I heard from a Facebook product manager was to find areas where these misinformation workers are based, e.g. Eastern European countries, and invest in startups there that will offer better jobs than spamming.

I don't really think Motivated Actors is the leverage point through which to tackle misinformation. I present it here for completeness.

Public Environment

While motivated actors initiate misinformation campaigns, it is members of the public that propagate them through sharing. This asymmetry is what makes these campaigns powerful, and what makes social media engaging and profitable in general. Clearly not just any misinformation is propelled through the public at high velocity. There has to be a fit between the flavor of misinformation, the product itself, and the audience. There are doubtless niches of UFO conspiracy theorists empowered by social media, but the Public Environment does not match UFO conspiracy theories sufficiently for the conspiracies to grow to the size and impact of, say, QAnon.

So what properties of the public environment lead to fit with particular misinformation? I can think of several.

Social Immune System Deficiencies

There's a reason older people fall for misinformation campaigns more readily. They grew up in an environment where media was controlled by a smaller set of gatekeepers than it is today. While the CBS Evening News with Walter Cronkite was certainly not ideologically neutral, it was at least singular. There were few competing narratives available through media, which gave us the collective illusion that there is an objective truth we can all agree upon. Today, you can find any community you want that will support any fringe view you want. But more importantly, these communities can find you.

When misinformation content breaks into one's world, it looks just like any other content. On Fox News it adopts the aesthetics of the nightly news. On Facebook it shows up as a link preview just like the New York Times. If you grew up in this world, you understand the signs to look for to identify the actors causing you to interact with a piece of content. If this is all new to you, you might be prone to trusting what is on your screen.

It takes years to adjust our collective understanding of media. I imagine that this media maturity rate is normally distributed, and some people will lag. It doesn't help that social media in particular is often trying to deceive users by mixing paid advertising content with organic content; the same deceptive tactics are easily utilized by misinformation actors.

If we'd like to intervene in the misinformation problem at the level of Social Immune System Deficiencies, we could educate the public on media literacy. We could also pass regulations that require paid content to be more explicitly separated, including with transparency on who is paying for content. I should be able to see all the financial backers of the Washington Post just the same as I should an ideologically-motivated small media entity.

Material Conditions

I'll take it as an axiom that healthy people with healthy minds don't spread misinformation. If you have the time and mental space to understand the world, your marvelous human faculties are quite up to the task of making sense of your environment.

But that is not the situation we have today. We are ruled by despots in a sham democracy. Our environment certainly isn't natural, in that it is nothing like the environments our brains evolved to live in. Most people in the world live quite precarious lives under late capitalism. About 60% of Americans live paycheck to paycheck. The gig economy has been exposed as merely white people discovering the institution of servants. The ruling elite actually are rife with pedophiles who prey upon working class young women to sexually exploit, and there are no social repercussions for palling around with predators like Jeffrey Epstein and Bill Clinton.

This environment does not fit well into our minds. The contradictions of poverty in a world of plenty, of every form of exploitation conducted by the supposedly virtuous, break our attempts at using our faculties to understand the world. This is fertile ground for misinformation and conspiracy theories.

If we want to address misinformation campaigns through the vector of material conditions, well, then we have to address material conditions. That means a fairer distribution of society's wealth. It means holding the powerful accountable. We need to construct societies that are compatible with human nature. I know that capitalism, an inherently contradictory system, is not capable of answering these contradictions. A transition to socialism is the best way of addressing material conditions that I have studied, but reasonable people disagree.

Biases

Finally we should address the actual flaws in human reasoning. We seem to carry with us a natural xenophobic bias against people unlike us. This means we are prone to social versions of the attribution error; we see the best in people like us and the worst in people different from us.

Misinformation that plays to these biases is primed to move through social networks with the highest possible velocity. What you see around the world today, and I am sure it is coming here soon, is ad hoc groups forming on apps like WhatsApp to propagate stories about enemies, like members of ethnic minorities or abstract enemies like antifa, meant to inflame racial tensions. Sometimes, these groups take action and it leads to murder.

If we want to address misinformation campaigns by reducing human biases, we should take on anti-racist work. Material conditions are inseparable from anti-racist work, as racial animosity is the first tool oligarchs reach for when impoverishing a people.

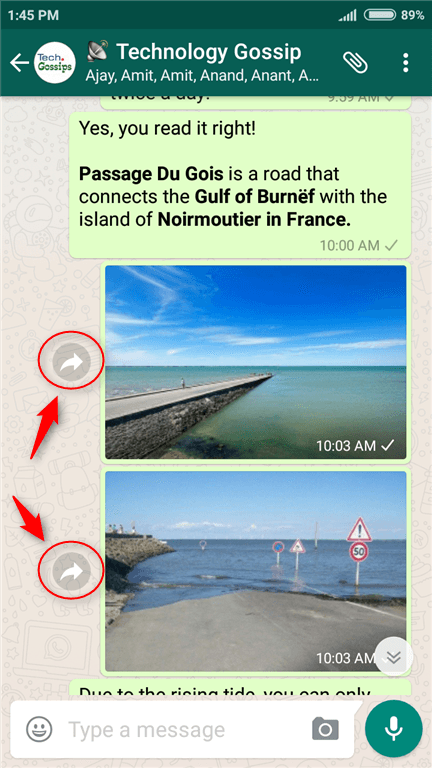

Product Structure

iMessage is not a particularly good vector for spreading misinformation while WhatsApp is. The reasons are subtle but clear once you see them. iMessage barely has group messaging functionality (or at least it is thoroughly broken when e.g. talking to non-iPhone users). This makes it a poor tool for broadcasting. If you'd like to share a photo or link from one iMessage conversation to another, you have to long press, then copy, then open another conversation, then paste the content. It's clear that the number of content shares is not a metric that the makers of iMessage have optimized very much. In contrast, WhatsApp is a pretty powerful tool for broadcasting. Groups are reliable and full-featured on WhatsApp, allowing up to 256 members and by default sending members a push notification upon every message. There are share/forward buttons all over the product. Clearly the makers of WhatsApp believe that one of their goals is to increase the amount of content forwarded on the platform.

Similarly, some products are built for broadcasting to strangers while others are built to engage more deeply with a known community. Social networks built on asymmetric relationships like Twitter's follow model assume very little trust between users. Networks built on symmetric relationships, like early Facebook, assume more trust. Today, almost all large social networks favor a broadcast model, as that has outcompeted small network models for investment and user time.

These kinds of decisions are evident throughout social products. By optimizing the user interactions required to share content, you increase the velocity of content through the network. Content that thrives in high-velocity environments will succeed.

If you've been following me for a while, you know that I think product structure is the place to address misinformation campaigns. I believe the industry-wide devotion to optimizing first engagement metrics (likes and shares) and later time spent has led to product features that may as well be purpose-built for spreading misinformation campaigns. I think a cooling down of social content velocity is necessary, and it could be done in a minimally invasive way.

Digital Lockdowns

My proposed solution boils down to limiting or disabling certain product features within regions and around topics where misinformation is rife. The various social features of a product should be broken into groups and roughly ranked along a spectrum of how much potential for abuse they carry versus the value they provide. In areas where misinformation is more rampant, the top end of the spectrum gets cut off.
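One way to picture the spectrum and its cutoff is the sketch below. Everything in it is an assumption made for illustration: the feature names, the 1 to 10 scores, the ratio used as a risk measure, and the rule that the safest feature always stays on.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Feature:
    name: str
    abuse_potential: int  # hypothetical 1-10 score
    user_value: int       # hypothetical 1-10 score

    @property
    def risk(self) -> float:
        # Higher means more abuse potential relative to the value provided.
        return self.abuse_potential / self.user_value


# Hypothetical features and scores, for illustration only.
FEATURES = [
    Feature("direct_message", 2, 9),
    Feature("friend_feed_post", 3, 8),
    Feature("group_broadcast", 7, 6),
    Feature("one_tap_forward", 9, 4),
]


def enabled_features(features, misinformation_level: float):
    """Return the feature names left on for a level in [0, 1].

    Level 0 keeps everything; higher levels cut off more of the
    riskiest end of the spectrum. The safest feature always remains.
    """
    ranked = sorted(features, key=lambda f: f.risk)
    keep = max(1, round(len(ranked) * (1.0 - misinformation_level)))
    return [f.name for f in ranked[:keep]]
```

Calling `enabled_features(FEATURES, 0.5)` with these made-up scores leaves only direct messages and friend feed posts switched on, while a level of 0 leaves the product untouched.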

Social platforms already use this strategy in an ad hoc fashion. In 2018 WhatsApp limited message forwarding to only 5 users at a time in India in response to misinformation campaigns. The limit was 20 elsewhere, and in 2019 WhatsApp brought the limit of 5 to all users. I propose doing more of this kind of limitation and applying the strategy to topics as well as regions.

Social platforms have lots of knobs to tweak like this:

Prioritizing original native content over links (photos of my cat instead of memes and media articles)

Hiding share buttons behind dropdown menus or requiring an explicit copy/paste to share

Throwing up roadblocks to the share interaction like Twitter has done

Slowing distribution of content related to a particular topic, like an election

Prioritizing symmetric relationship (friend) content over asymmetric relationship (follow) content

There's a debate, rarely engaged with in good faith, about whether social content producers are entitled to have their content circulate to users who have expressed some interest in seeing their content, e.g. by following them. Conservatives in particular concoct theories about being censored when not all of their followers see the content they post. These alleged "shadowbans" don't actually occur on the major social platforms, but I believe the approach has merit. I do not consider non-circulation or "blackholing" to be censorship.

Suppose a politician repeatedly posts misinformation campaigns, sometimes calling for violence. If this politician's content were blackholed but not deleted, they would still have quite a platform and receive free hosting for their content. What they don't get, what they aren't entitled to, is the free distribution from the platform. Hosting is a relationship between the platform and the producer whereas distribution is a relationship between all producers, the platform, and the consumer. There's just a lot more going on there and it's hard to argue that the user would be better-served by seeing misinformation in their ranked feeds than any of the other available content.

As a concrete example, probably most broadcast content about elections should be deprioritized in ranked feeds shortly before an election. The potential for abuse is very high and no one needs reminding that an American election is occurring. If users really want broadcast information about an election they can go directly to a media entity's website or social media page. Users can, of course, converse with their friends on social platforms about elections, but broadcasting of viral content and links to external entities could be limited.
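A ranked feed could apply that rule roughly as follows. This is a sketch under stated assumptions, not how any platform's ranker works: the `Post` fields, the 14-day window, and the 0.1 penalty are all hypothetical, and `base_score` stands in for whatever score the existing ranker already produces.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Post:
    author: str
    topic: str          # assumed to come from some upstream topic classifier
    from_friend: bool   # symmetric (friend) relationship with the viewer
    is_link: bool       # links out to an external entity
    base_score: float   # score from the existing ranker


def adjusted_score(post, today, election_day,
                   window=timedelta(days=14), penalty=0.1):
    """Down-rank broadcast election content shortly before an election."""
    in_window = timedelta(0) <= election_day - today <= window
    # Native posts from friends are treated as conversation, not broadcast.
    is_broadcast = post.is_link or not post.from_friend
    if in_window and post.topic == "election" and is_broadcast:
        return post.base_score * penalty
    return post.base_score


def rank_feed(posts, today, election_day):
    """Order posts by adjusted score, highest first. Nothing is deleted."""
    return sorted(posts,
                  key=lambda p: adjusted_score(p, today, election_day),
                  reverse=True)
```

Note that the post is never removed: it is still hosted and still reachable on the author's page, it simply stops winning the ranked feed during the window.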

But who decides when to activate a topic-based or regional lockdown? In my mind that is actually the value of an external oversight board. With different incentives, an external board can identify different threats than the platform owners can. A truly impactful Facebook Oversight Board would employ experts to measure misinformation flows across region and topic and identify trouble spots. The external oversight board should build a transparent framework for evaluating the severity of misinformation. It could use a color code scheme similar to the system states use to evaluate the severity of coronavirus infections in the population. Content in 'purple zone' topics would lose high-velocity broadcast features. Putting this framework in place ahead of time also helps remove bias from the actions of this proposed oversight board, as there should be as much objectivity in the evaluation guidelines as possible.
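A transparent framework of this kind could be as simple as a published table of thresholds. The sketch below is purely illustrative: only the 'purple zone' comes from the proposal above, while the other zone names, the threshold values, and the idea of measuring a "flagged share" of sampled content are assumptions.

```python
from enum import IntEnum


class Zone(IntEnum):
    YELLOW = 1
    ORANGE = 2
    RED = 3
    PURPLE = 4


# Hypothetical thresholds: the share of sampled content on a topic (or in
# a region) that reviewers flagged as misinformation. Checked high to low.
THRESHOLDS = [
    (0.20, Zone.PURPLE),
    (0.10, Zone.RED),
    (0.05, Zone.ORANGE),
]


def classify(flagged_share: float) -> Zone:
    """Map a measured share in [0, 1] to a severity zone."""
    for threshold, zone in THRESHOLDS:
        if flagged_share >= threshold:
            return zone
    return Zone.YELLOW


def broadcast_features_allowed(zone: Zone) -> bool:
    """High-velocity broadcast features are lost only in the purple zone."""
    return zone < Zone.PURPLE
```

Because the thresholds are fixed and published ahead of time, the board's decision for any given topic reduces to a measurement, which is where the objectivity would come from.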

This external pressure would also drive social platforms to continue investing in their efforts to automatically manage and structurally discourage misinformation. I am skeptical of automation here: I don't think people will like the result, and in 2020 we all know that automation through AI is often just automated application of human biases. But there is a place for automated enforcement, as topic determination for content is obviously a machine learning problem. If the oversight board declares an entire election to be in the 'purple zone', cutting off high-velocity sharing features around the topic, social platforms will be highly motivated to get the problem under control themselves so they can continue delivering value as a place to engage in civic discussions.

Mark Zuckerberg has called for more regulation of social products: “I believe we need a more active role for governments and regulators. By updating the rules for the internet, we can preserve what’s best about it – the freedom for people to express themselves and for entrepreneurs to build new things – while also protecting society from broader harms.” This is a nice thing to ask for 15 years into winning a monopoly and privatizing the gains from these wins. This is another instance of the regulatory arbitrage that has defined Silicon Valley since 2000. "Innovators" race ahead of the public and the state to soak up all the surplus value they can, then when problems inevitably arise it's the state's fault for not keeping up with the externalities, which was, of course, the entire premise and strategy of the successful private venture.

The Facebook Oversight Board is Facebook's simulacrum of government oversight. Facebook's billionaire Board of Directors has defined the Oversight Board the way they like to see the state: toothless and slow-moving. All the important decisions remain under Facebook's control. The change we want to see involves social products themselves, but the decision-making processes that create these products are entirely privatized into corporate hierarchies. There is no space for the public except as an experiment population. The place to address the social ills of the internet is earlier in the process. Not products built by committee but organizational structures that reflect the stakeholders of social products: workers and the public, not absentee shareholders. An oversight board would be a helpful part of the formula in addressing misinformation, but it is only one piece.