The problem with AI misalignment

A new art installation in the Mission gives the misalignment problem a physical address

This month's Silicon Valley Intellectual buzzwords are: existential risk, artificial general intelligence, values alignment, and safeguards. Just throw these words into a jumble of sentences and you will be taken seriously as a Deep Thinker concerned about the Big Problems. There's no need to investigate any further; in fact, investigating what is transpiring in actual history is for the little people. Real serious SV intellectuals don't trouble themselves with technology or society as they actually exist. They construct elaborate arguments in their expansive mind palaces. They understand that a technology exists in itself and for itself; the humans who create and wield it are merely incidental.

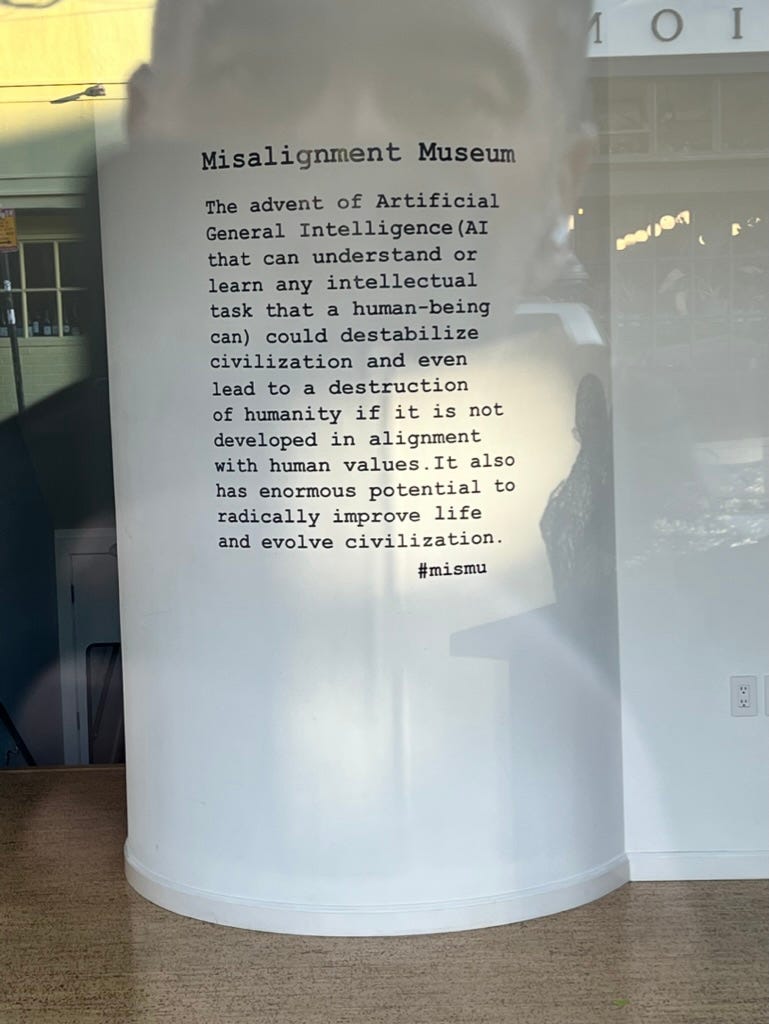

Starting this week, this very Valley-esque mode of thought has a physical instantiation (mere blocks from my loft). The Misalignment Museum is a new art installation that hopes to "inspire and build support to formulate and enact risk mitigation measures we can take to ensure a positive future in the advent of [artificial general intelligence]". This space is a physical representation of an analysis generally referred to as the "misalignment problem".

The misalignment problem in AI can be formulated in this way: In the future, work on artificial general intelligence (AGI) is likely to be successful and will give birth to a superintelligence with no analogue in human history. This superintelligence will hold extreme power over human lives. The values of the superintelligence, insofar as this new entity can be said to have morals or values, may or may not be compatible with any human values, including even the continued survival of the human race. As AGI is developed, it should be developed in a way that maximizes the likelihood that the new superintelligence possesses values that are compatible with human values.

Beyond illustrating the widespread usage of amphetamines among AI enthusiasts, this argument does not succeed in telling us much about AI and its development. It makes wild estimates about the speed and specific path of technological development, which has historically been very difficult to predict. It is not very specific about what is meant by human values; which groups of humans are eligible to have their values encoded in forthcoming AI? It supposes that a superintelligence can meaningfully exist, and that this potentially incomprehensible entity would have something we could squint at and call values at all. I'm going to focus on what I view as the most important and most telling shortcoming of this analysis: how the misalignment problem elides any actually existing humans or social relations between humans.

Misalignment as an analysis fails the same way a lot of tech analysis fails: it considers a technology separate from the social relations that lead to its creation and ultimately control it. AI is not being developed by disinterested actors; it is currently being developed within profit-seeking corporations as a tool to drive revenue for such corporations and ultimately to accrue profits for their shareholders. Rather than interrogate who is building AI for whose benefit, the misalignment analysis assumes these questions do not matter because the teleology (analysis of a system's purpose contained in itself rather than the causes which give rise to it) of AI is fully self-contained.

The intellectual elites of Silicon Valley commit the teleology error constantly. As a relevant example, every labor-saving application of automation technology is heralded as the end of toil, yet the average number of hours a worker works in a year has been constant for nearly a century in the US. To avoid the teleology error, we must instead analyze a technology in the context of the social relations around it. Automation technology is controlled by people who own capital and seek to acquire more capital. It is in their interests for productivity gains to drive more profit rather than less global labor time, so those are the ends to which automation technology is applied. AI will be developed and deployed under these same social relations, so the results will be analogous. If we want to apply technological development towards other ends, like the end of toil, we must change the social relations that drive the development of technology.

The material problems caused by AI will arrive far before any superintelligence, and the nature of any future and more advanced AI will depend on the path from here to there. It is critical to ground our analysis in AI as it is actually being developed, and that includes who is developing it and for what ends. Unsurprisingly, we do not have to look very deeply to see troubling portents ahead in AI's development.

OpenAI, a leading AI firm, last week announced a new partnership with the consulting firm Bain & Company. Bain claims that with OpenAI's help, they will "pinpoint the generative AI use cases that will create the most value, rapidly deploy a proof of concept, then implement the capabilities across your operating model, business processes, and data assets." While this is vague, we can look at what else Bain does to understand what kind of partners AI developers are finding a foothold with. Recently Bain was in the news for directing Meta's round of layoffs (downsizing management is a common service offered by consulting firms). The linked site, which advertises that it can help you "land that dream consulting offer," lays it out clearly: "Companies often bring in firms like Bain to advise on layoff decisions so they have an external party to blame if the cuts don’t have the desired effect, or if the company’s core capabilities suffer a decline in quality." Major tech platforms already obscure their culpability in the content distribution they enable behind algorithmic systems (examples abound). It is easy to imagine future management decisions being laundered through both consulting firms and the AI systems they use to direct decisions that impact workers, like layoffs.

Beyond its partnerships, we can look at who owns OpenAI. Its investors include Peter Thiel, longtime tech villain and recent Christian end times crank, as well as the outsourcing firm Infosys. The confluence of right-wing cultural reaction and labor-disciplining firms like Bain and Infosys tells us who is in the crosshairs of AI firms: the working class. Like most every labor-saving automation tool developed to date, AI will soon be used to discipline labor and to intensify the labor process while not reducing working hours or raising wages. In previous decades such business leaders would at least pay lip service to their role as “job creators.” Now, the mask has slipped off and OpenAI is making it clear they are coming to take away your job.

Despite the pressing need for relevant analysis of AI technology, there is nothing about labor, ownership, or human society to be found in the statement of the misalignment problem or in the Apple Store-esque Misalignment Museum. The lack of grounding in reality reveals the misalignment problem to be nothing but an idle thought experiment, ultimately lacking in the intellectual weight needed to understand actually existing AI technology and its impacts. Rather than give the misalignment argument its own physical address, let’s abandon it where it originated: in “rationalist” forums full of navel-gazing nerds.